RAPPOR (Randomized Aggregatable Privacy-Preserving. Ordinal Response) defines a method which can analyse data while preserving the privacy of it

RAPPOR |

Bloom Filters

Before we look at RAPPOR, let's look at Bloom filters. For this we take a number of hash values of a string, and then set the corresponding bits for the Bloom filter. For example:

01234567890123456789012345678901

Add fred: 00000000000000100000010000000000 fred [21,14]

Add bert: 00000000100000100000010000000100 bert [29,8]

Add greg: 00000000100100100000011000000100 greg [11,22]

If we now search for "fred" we will see that bits 21 and 14 are set, so "fred" may be in the Bloom filter. If we try We now have bit position 8, 11, 14, 21, 22 and 29 set.

Now we can test for amy:

amy is not there [16,12]

New we can test for greg:

greg may be in there [11,22]

and here is a video presentation:

RAPPOR (Randomized Aggregatable Privacy-Preserving. Ordinal Response)

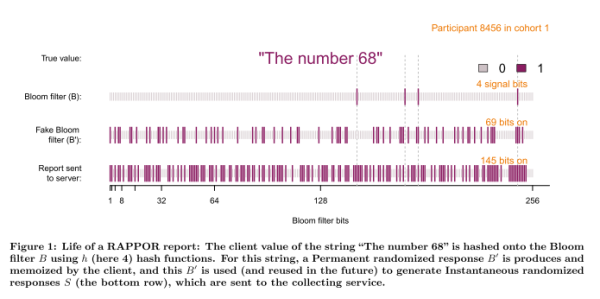

RAPPOR uses hashes and adding noise. For this we have a k-bit Bloom filter. There are then three steps to the gathering of information.

Signal. This involves taking the message and hashing it with a number of hashing methods, (h) and setting bits on a Bloom filter (B).

Next we have a Permanent randomized response (PRR), which will be memorized on the system. With this for each of bits (0 to k-1), and we create a fake Bloom filter, which is set as:

B_fake[i] = 1 for a probability of 0.5 x f

B_fake[i] = 0 for a probability of 0.5 x f

B_fake[i] = B[i] for a probability of 1 - f

So if f = 0.5, we will set with a fake 0 for 1-in-4 chance, a fake 1 for 1-in-4 chance, and the actual bit in the Bloom filter (B) for 1-in-2. This is equivalent to having a coin flip, where if it is head, we will lie about the result, but if it is tails we will tell the truth.

This will create a fake Bloom filter with noise (based on a coin flip), where half the time we will fake the bit, and the other time we will put the correct answer into the Bloom filter. This bit array is memorized for future reports.

When required to read the data, we perform the Instantaneous randomized response (IRR), which takes B_fake and creates S using:

If the bit in B_fake is set to 1, the corresponding bit in S is set to 1 with probability q. If the bit in B_fake is set to 0, the corresponding bit in S is set to 1 with probability p.

Google illustrate the process as follows [here]:

With this we have input data values of:

client,cohort,value 1,24,'hello' 1,24,'hello' 2,34,'goodbye' 1,43,'hello' 1,40,'help'

and it produces:

client,cohort,bloom,prr,irr 1,24,1000101000100000,0001001000110000,1011011011111100 1,24,1000101000100000,0001001000110000,1001001011001001 2,34,0000000001000111,0000000001011110,1000011100100111 1,43,0001000000100001,0101001110110011,1001001010101000 1,40,0010010000010000,0001110100011010,0101011111100010

We can see for the same client and cohort that we produce the same Bloom filter (1000101000100000), but the PRR has noise applied to it (0001001000110000). Finally the IRR value differs, even though we have the same input (1011011011111100 and 1001001011001001). In this way we cannot infer the input values.

If we use freq=1, all the bits will be random:

client,cohort,bloom,prr,irr 1,24,1000101000100000,0001011001111001,0011011010111000 1,24,1000101000100000,0001011001111001,0010100101110100 2,34,0000000001000111,1000100111011100,0000000011100011 1,43,0001000000100001,0101111111010111,0111000101100101 1,40,0010010000010000,0001100110101010,1000101111101011

If we look in more detail at the first entry, we see the bits that have changed (where an X is change) and where we see that 8 are changed and 8 or not (so we see a random pattern):

1000101000100000 0001011001111001 X--XXX---X-XX--X

and with f=0.5, we have a 1 in 2 chance of a fake bit:

client,cohort,bloom,prr,irr 1,24,1000101000100000,0001001000110000,1001101011111100 1,24,1000101000100000,0001001000110000,0111101011111000 2,34,0000000001000111,0000000001011110,0011111111111110 1,43,0001000000100001,0101001110110011,0011010010101011 1,40,0010010000010000,0001110100011010,1011100101011001

This time we only see four bits changing:

1000101000100000 0001001000110000 X--XX------X----

and with freq=0 we will get all of the bits from B coming through, and where there will be no random bits in B_fake:

client,cohort,bloom,prr,irr 1,24,1000101000100000,1000101000100000,1010101100000111 1,24,1000101000100000,1000101000100000,0000010111111000 2,34,0000000001000111,0000000001000111,1101010010001011 1,43,0001000000100001,0001000000100001,0000001011010111 1,40,0010010000010000,0010010000010000,1010111011001001

Example

Here we go ... you have 10 friends on Facebook, and you want to know the split of male v female. So how can we do this without actually asking them to tell you their gender?

Well, we get them to toss a coin. If it is heads, they should lie, else if it is a tails they should tell the truth. So here we go:

Friend 1 (Male). Tosses coin: Tails. Tell: Male (Truth!) Friend 2 (Male). Tosses coin: Heads. Tell: Male (Lie!) Friend 3 (Male). Tosses coin: Heads. Tell: Female (Lie!) Friend 4 (Female). Tosses coin: Tails. Tell: Female (Truth!) Friend 5 (Female). Tosses coin: Heads. Tell: Female (Lie!) Friend 6 (Female). Tosses coin: Heads. Tell: Male (Lie!) Friend 7 (Male). Tosses coin: Tails. Tell: Male (Truth!) Friend 8 (Male). Tosses coin: Tails. Tell: Male (Trust!) Friend 9 (Male). Tosses coin: Tails. Tell: Male (Truth!) Friend 10 (Female). Tosses coin: Heads. Tell: Male (Lie!)

Note: a lie has a 50/50 chance of being male or female.

So the number of heads are the same as tails (which is expected), and we have 6 males, and 4 females from the results, and this is the same as the cohort, but we can't tell who was actually telling the truth or not. This is the basis for methods of privacy within Big Data analysis, where we can lie (or add noise).